
8/16/2023 Airflow Docker Kubernetes

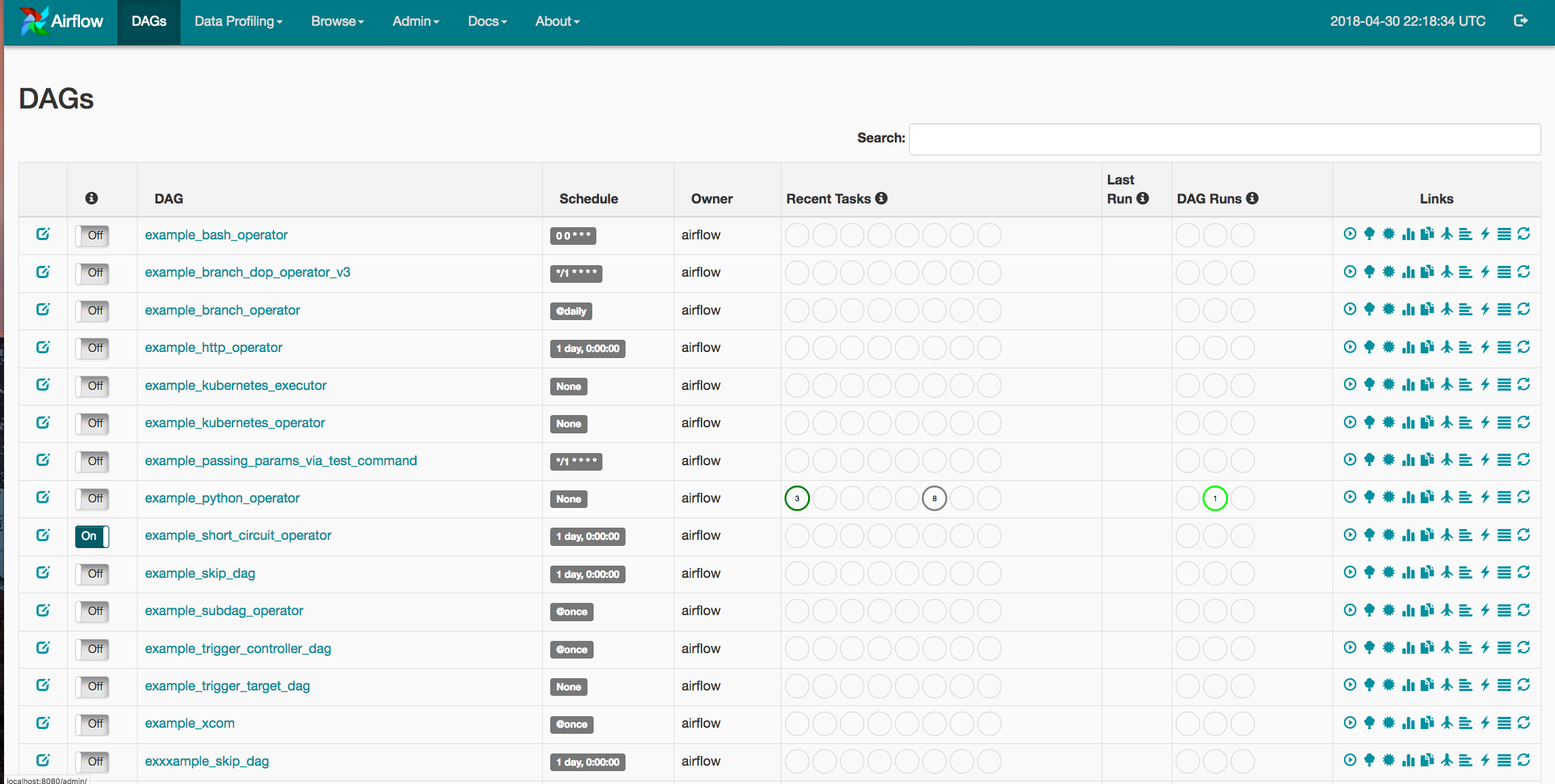

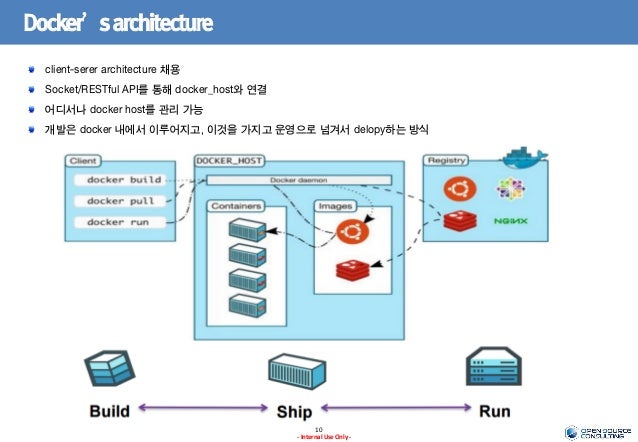

In Data Engineer’s Lunch #47, we will use Kubernetes to deploy Airflow. The live recording of the Data Engineer’s Lunch, which includes a more in-depth discussion, is also embedded below in case you were not able to attend live. If you would like to attend a Data Engineer’s Lunch live, it is hosted every Monday at noon EST. Register here now!

Why Airflow on Kubernetes?

Since its inception, Airflow’s greatest strength has been its flexibility. Airflow offers a wide range of integrations for services ranging from Spark and HBase to services on various cloud providers. Airflow also offers easy extensibility through its plug-in framework. However, one limitation of the project is that Airflow users are confined to the frameworks and clients that exist on the Airflow worker at the moment of execution. A single organization can have varied Airflow workflows ranging from data science pipelines to application deployments. This difference in use case creates issues in dependency management, as both teams might use vastly different libraries for their workflows.

To address this issue, we’ve utilized Kubernetes to allow users to launch arbitrary Kubernetes pods and configurations. Airflow users can now have full power over their run-time environments, resources, and secrets, basically turning Airflow into an “any job you want” workflow orchestrator.

The Kubernetes Operator

Before we move any further, we should clarify that an Operator in Airflow is a task definition. When a user creates a DAG, they would use an operator like the “SparkSubmitOperator” or the “PythonOperator” to submit and monitor a Spark job or a Python function, respectively. Airflow comes with built-in operators for frameworks like Apache Spark, BigQuery, Hive, and EMR. It also offers a Plugins entrypoint that allows DevOps engineers to develop their own connectors.

Airflow users are always looking for ways to make deployments and ETL pipelines simpler to manage. Any opportunity to decouple pipeline steps, while increasing monitoring, can reduce future outages and fire-fights. The following is a list of benefits provided by the Airflow Kubernetes Operator:

Airflow’s plugin API has always offered a significant boon to engineers wishing to test new functionalities within their DAGs. On the downside, whenever a developer wanted to create a new operator, they had to develop an entirely new plugin. Now, any task that can be run within a Docker container is accessible through the exact same operator, with no extra Airflow code to maintain.

Flexibility of configurations and dependencies: For operators that are run within static Airflow workers, dependency management can become quite difficult. If a developer wants to run one task that requires SciPy and another that requires NumPy, the developer would have to either maintain both dependencies within all Airflow workers or offload the task to an external machine (which can cause bugs if that external machine changes in an untracked manner). Custom Docker images allow users to ensure that the task’s environment, configuration, and dependencies are completely idempotent.

Usage of Kubernetes secrets for added security: Handling sensitive data is a core responsibility of any DevOps engineer. At every opportunity, Airflow users want to isolate any API keys, database passwords, and login credentials on a strict need-to-know basis. With the Kubernetes operator, users can utilize Kubernetes Secrets to store all sensitive data. This means that the Airflow workers will never have access to this information, and can simply request that pods be built with only the secrets they need.

Architecture

The Kubernetes Operator uses the Kubernetes Python Client to generate a request that is processed by the APIServer (1). Kubernetes will then launch your pod with whatever specs you’ve defined (2). Images will be loaded with all the necessary environment variables, secrets, and dependencies, enacting a single command. Once the job is launched, the operator only needs to monitor the job’s health and track its logs (3). Users will have the choice of gathering logs locally to the scheduler or to any distributed logging service currently in their Kubernetes cluster.

The KubernetesPodOperator (KPO) runs a Docker image in a dedicated Kubernetes Pod. By abstracting calls to the Kubernetes API, the KubernetesPodOperator lets you start and run Pods from Airflow using DAG code. Before using it, you need to know the requirements for running the KubernetesPodOperator and how to configure it.
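To make the pod request described in this post more concrete, here is a minimal sketch in plain Python of the kind of Pod specification the operator asks Kubernetes to launch. It is an illustration, not Airflow's actual implementation: the helper `build_pod_manifest`, the image names, the task names, and the secret name `warehouse-credentials` are all hypothetical. Only the manifest layout (`apiVersion`, `kind`, `spec.containers`, `env[].valueFrom.secretKeyRef`) follows the standard Kubernetes v1 Pod schema.

```python
import json
from typing import Dict, List, Optional


def build_pod_manifest(
    task_name: str,
    image: str,
    command: List[str],
    secret_env: Optional[Dict[str, Dict[str, str]]] = None,
    namespace: str = "default",
) -> dict:
    """Build a Kubernetes v1 Pod manifest for one run-to-completion task.

    secret_env maps an environment variable name to a reference of the form
    {"secret": <Secret name>, "key": <key inside that Secret>}. Only the
    reference is written into the manifest, never the secret value itself.
    """
    env = [
        {
            "name": var_name,
            "valueFrom": {
                "secretKeyRef": {"name": ref["secret"], "key": ref["key"]}
            },
        }
        for var_name, ref in (secret_env or {}).items()
    ]
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": task_name,
            "namespace": namespace,
            "labels": {"task": task_name},
        },
        "spec": {
            # The pod runs a single command and is not restarted afterwards.
            "restartPolicy": "Never",
            "containers": [
                {"name": "base", "image": image, "command": command, "env": env}
            ],
        },
    }


# Two tasks with different dependencies, each isolated in its own image.
# Image names and the Secret name are hypothetical placeholders.
scipy_pod = build_pod_manifest(
    task_name="fit-model",
    image="example.com/analytics/scipy-task:1.0",
    command=["python", "fit_model.py"],
    secret_env={
        "DB_PASSWORD": {"secret": "warehouse-credentials", "key": "password"}
    },
)
numpy_pod = build_pod_manifest(
    task_name="aggregate",
    image="example.com/analytics/numpy-task:1.0",
    command=["python", "aggregate.py"],
)

if __name__ == "__main__":
    print(json.dumps(scipy_pod, indent=2))
```

Each task gets its own image, so the SciPy and NumPy dependencies never have to coexist on an Airflow worker, and the manifest carries only a reference to the secret, which Kubernetes resolves when it starts the pod. In a real DAG you would not build this dictionary by hand: the KubernetesPodOperator accepts comparable settings (for example `image` and `cmds`) and generates the request for you.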
